The world of artificial intelligence is moving at a breakneck pace, but as we build faster, we often leave the back door wide open. Every time a developer pulls a pre-trained model from a public repository or runs a …

AI Red Teaming

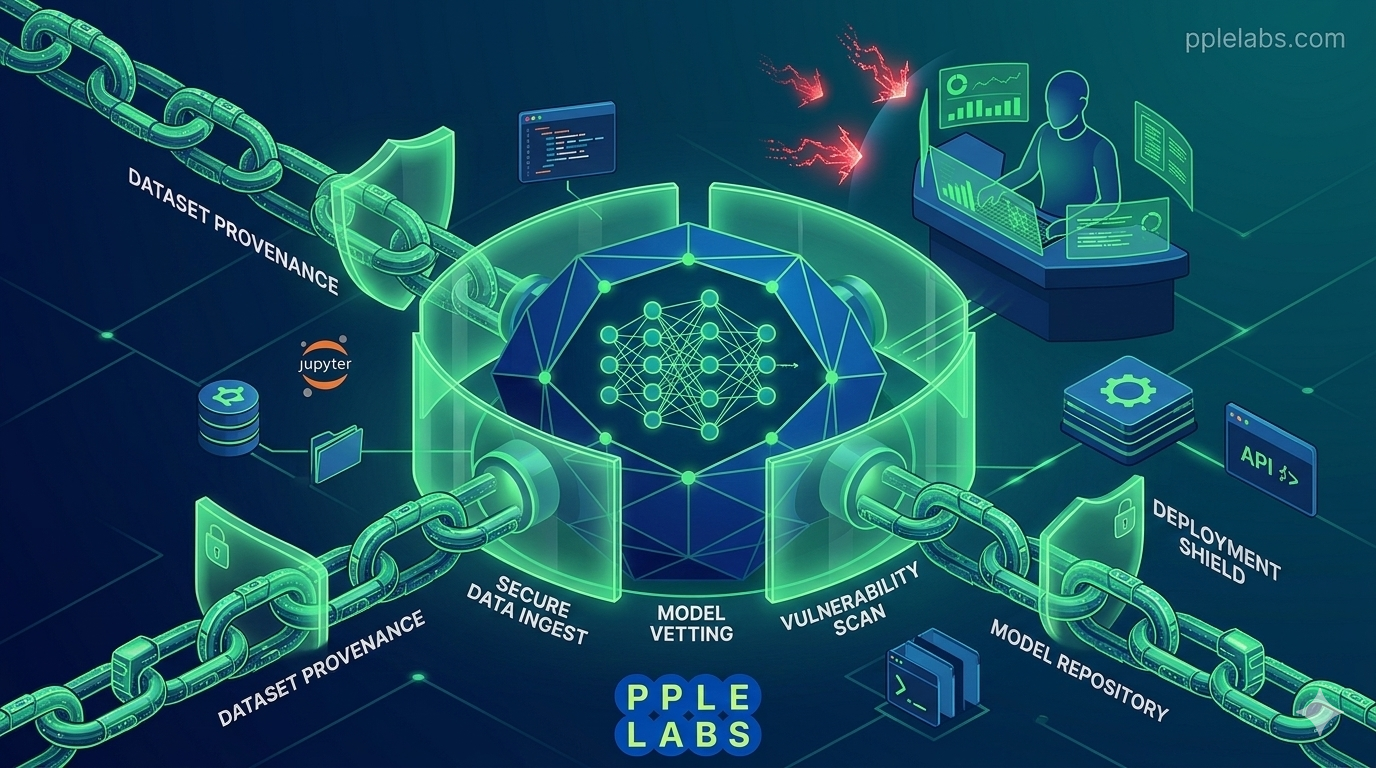

Protect AI: Securing the ML Supply Chain

Tags: AI Red Teaming, AI Security Posture Management, AI Vulnerability Scanning, AISPM, Cybersecurity 2026, Jupyter Notebook Security, LLM Security, machine learning security, ML Supply Chain Security, MLSecOps, Model Poisoning Prevention, PpleLabs, Prompt Injection Defense, Protect AI, Protect AI Guardian Review

HiddenLayer AI Security for Medical Models

The healthcare industry has entered a bold new era where Large Language Models (LLMs) assist in everything from clinical documentation to complex diagnostic reasoning. However, as these models become more integrated into patient care, they also become attractive targets for …

Tags: adversarial AI attacks, AI model security 2026, AI Red Teaming, data poisoning protection, healthcare AI defense, HiddenLayer AI, HiddenLayer review, LLM safety guardrails, machine learning security, medical AI compliance, medical LLM protection, MLDR, Pplelabs healthcare, prompt injection prevention, securing medical AI

AI Red Teaming: Stress-testing security for clinical models.

AI Red Teaming is the most critical safety net for modern medicine. Imagine a world where a digital doctor makes a life-or-death decision based on a hidden flaw in its logic. That sounds like a plot from a …

Tags: Adversarial Machine Learning, agentic red teaming, AI Red Teaming, AI risk management, bias mitigation, clinical AI safety, Clinical Decision Support, Healthcare Cybersecurity, Healthcare Technology, hospital AI governance, jailbreaking prevention, LLM stress testing, Medical Device Security, medical model security, NIST AI framework