The Unseen Force Driving AI (Nvidia GPUs)

Have you ever stopped to think about what’s really happening behind the scenes when you ask a smart speaker a question or when your phone’s camera recognizes a face? It feels like magic, right? We see all these incredible advancements in artificial intelligence (AI), from self-driving cars to chatbots that can write a novel, but we often don’t see the engine that powers it all. That’s where AI hardware, and more specifically, Nvidia’s graphics processing units (Nvidia GPUs), come in. They are the unseen heroes, the foundational pieces of technology that make the AI revolution possible.

1.1. What is an AI Revolution?

When we talk about an “AI revolution,” we’re not just talking about a new app or a cool gadget. We’re talking about a fundamental shift in how we solve problems and interact with technology. It’s a period of rapid, widespread change where machines are learning, adapting, and making decisions in ways we’ve never seen before. This isn’t just happening in sci-fi movies anymore; it’s here, and it’s impacting everything from healthcare and finance to entertainment and manufacturing.

1.2. The Central Role of Hardware in AI

You see, an AI algorithm is just a set of instructions. To truly make it work, you need powerful hardware to execute those instructions at an astonishing speed. Think of it like this: you can have the most brilliant blueprints for a skyscraper, but without the right construction equipment, that building isn’t going to get built. In the world of AI, GPUs are that essential heavy-duty equipment. They provide the raw computational power needed to process massive amounts of data, which is the very food that AI models need to learn and grow.

1.3. A Brief History of Nvidia GPUs

Nvidia’s journey began with gaming. For years, their GPUs were the go-to for gamers who wanted to experience the most realistic, high-fidelity graphics. But something fascinating happened along the way. Researchers and developers discovered that the very architecture that made GPUs great for rendering complex graphics also made them perfect for another kind of task: parallel processing. This discovery was a game-changer. Suddenly, these graphics processing units were no longer just for games; they were the new engines for scientific computing and, eventually, the cornerstone of the AI revolution.

2. Understanding the GPU: More Than Just Graphics

Let’s break down why GPUs are so special. It’s easy to get confused, especially since the CPU (Central Processing Unit) has been the brain of our computers for so long. But the two are designed for very different jobs.

2.1. From Gaming to a New Frontier

Back in the day, GPUs were built with one primary purpose: to handle the massive mathematical calculations required to render pixels on a screen. Every pixel on your display needs to be calculated and updated, and in a fast-paced video game, that means millions or even billions of calculations every second. The engineers at Nvidia designed the GPU with hundreds, if not thousands, of small, efficient cores to handle these tasks simultaneously. They were built for parallelism, doing many simple things at once. This very design is what made them so valuable for AI.
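To put a rough number on “millions or even billions,” here is a back-of-the-envelope sketch. The 1920×1080 resolution, the 60 frames per second, and the helper name `pixel_updates_per_second` are illustrative assumptions, not figures from Nvidia.

```python
def pixel_updates_per_second(width: int, height: int, fps: int) -> int:
    """Number of pixel values that must be produced every second."""
    return width * height * fps


# Assumed display: Full HD at 60 frames per second.
full_hd = pixel_updates_per_second(1920, 1080, 60)
print(f"{full_hd:,} pixel updates per second")  # prints 124,416,000
```

Each pixel usually needs many arithmetic operations (lighting, texturing, blending), so the real count of calculations is a multiple of this figure.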

2.2. The Core Difference Between CPUs and GPUs

Think of a CPU as a small team of highly skilled specialists. They can handle a wide variety of tasks, and they can do each one incredibly quickly. If you need to perform a single, complex calculation, the CPU is your go-to. On the other hand, think of a GPU as a massive army of general workers. They might not be as fast as a specialist on a single task, but they can work on thousands of tasks all at the same time. AI and machine learning tasks, which involve performing the same calculation over and over on different pieces of data, are perfectly suited for this kind of “army” approach.
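The specialists-versus-army trade-off can be sketched with a toy timing model. Every number below (8 fast workers, 4,000 slower ones, the per-task times) is a made-up assumption chosen only to show the shape of the trade-off. Real chips are far more complicated.

```python
import math


def batch_time(num_tasks: int, workers: int, seconds_per_task: float) -> float:
    """Time to finish independent, identical tasks when `workers` run side by side."""
    rounds = math.ceil(num_tasks / workers)  # how many waves of work are needed
    return rounds * seconds_per_task


# Hypothetical "CPU": few workers, each very fast.
# Hypothetical "GPU": many workers, each ten times slower.
cpu_bulk = batch_time(num_tasks=1_000_000, workers=8, seconds_per_task=1e-6)
gpu_bulk = batch_time(num_tasks=1_000_000, workers=4_000, seconds_per_task=10e-6)

cpu_single = batch_time(num_tasks=1, workers=8, seconds_per_task=1e-6)
gpu_single = batch_time(num_tasks=1, workers=4_000, seconds_per_task=10e-6)

print(f"one million tasks: cpu-like {cpu_bulk:.4f}s, gpu-like {gpu_bulk:.4f}s")
print(f"a single task:     cpu-like {cpu_single:.6f}s, gpu-like {gpu_single:.6f}s")
```

Under these assumptions the army wins comfortably on the bulk job, while the specialist wins on the single task, which is the pattern the analogy describes.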

2.3. The Power of Parallel Processing

Parallel processing is the secret sauce. Instead of tackling a problem one step at a time (like a CPU often does), a GPU breaks the problem down into thousands of smaller, identical pieces and processes them all at once. This is what allows for the rapid training of deep neural networks. For example, when an AI is learning to identify a cat in a picture, it needs to perform similar calculations on every single pixel. A GPU can handle all those pixel calculations in parallel, dramatically reducing the time it takes to learn. To better understand this concept, you might want to read a bit about the role of other companies that focus on data and AI infrastructure, like Scale AI, which PPLE Labs discussed in a recent post.
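The “break it into identical pieces” idea can be sketched in plain Python. This is only an illustration of the decomposition: it uses a thread pool from the standard library, not a GPU, and the `brighten` operation and the tiny eight-pixel “image” are hypothetical stand-ins for real per-pixel math.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel):
    """The same small calculation applied to every pixel (clamped to 255)."""
    return min(pixel + 40, 255)


def brighten_chunk(chunk):
    """Process one independent piece of the image."""
    return [brighten(p) for p in chunk]


def split(pixels, num_chunks):
    """Cut the pixel list into roughly equal, independent chunks."""
    if not pixels:
        return []
    size = -(-len(pixels) // num_chunks)  # ceiling division
    return [pixels[i:i + size] for i in range(0, len(pixels), size)]


def brighten_parallel(pixels, workers=4):
    """Hand each chunk to a separate worker, then stitch the results together."""
    chunks = split(pixels, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(brighten_chunk, chunks))  # map keeps chunk order
    return [p for chunk in results for p in chunk]


image = [0, 100, 200, 250, 30, 220, 255, 10]
print(brighten_parallel(image))
```

Because no pixel depends on any other, the chunks can be processed in any order or all at once and the answer is the same. That independence is what a GPU exploits, with thousands of cores instead of four threads.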

3. The Crucial Role of Nvidia GPUs in AI and Machine Learning

Nvidia’s GPUs are the workhorses of the AI world. They handle two major phases of an AI model’s life: training and inference.

3.1. Training AI Models: A Computational Heavy-Lift

Training an AI model is like teaching a child. You show it thousands upon thousands of examples and give it feedback. For a deep learning model, this means feeding it huge amounts of data and letting it adjust its internal parameters to get better at a task. This process, which involves complex matrix multiplications and vector operations, is incredibly demanding. Without GPUs, training a large neural network could take weeks or even months. With GPUs, we can complete these tasks in a matter of days or hours, accelerating the pace of innovation.
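Here is a minimal sketch of what “adjusting internal parameters” looks like, assuming the simplest possible model: a single weight `w` in `y = w * x`, trained with plain gradient descent. The data, learning rate, and epoch count are illustrative assumptions. A real deep learning model has millions or billions of such parameters, which is where the heavy matrix math comes from.

```python
def train(xs, ys, learning_rate=0.01, epochs=200):
    """Fit y = w * x by repeatedly nudging w to reduce the mean squared error."""
    w = 0.0
    n = len(xs)
    for _ in range(epochs):
        # Forward pass: a prediction for every example.
        preds = [w * x for x in xs]
        # Feedback: gradient of the mean squared error with respect to w.
        grad = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        # Adjustment: move w a small step against the gradient.
        w -= learning_rate * grad
    return w


# Toy data generated from y = 3x, so training should recover w close to 3.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 6.0, 9.0, 12.0]
print(f"learned w = {train(xs, ys):.6f}")
```

Every pass over the data repeats the same multiply-and-add pattern on each example, which is the kind of work that spreads naturally across many GPU cores.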

3.2. AI Inference: Putting the Models to Work

Once an AI model is trained, it’s time to use it. This is called inference. For example, when you use a voice assistant, a trained AI model is analyzing your speech in real time to understand what you’re saying. While inference can be less computationally intensive than training, it still needs to happen very quickly, with minimal latency. High-performance GPUs are essential for this, especially in applications where quick decisions are critical, such as in self-driving cars or real-time fraud detection systems.
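As a rough sketch, inference is just the forward pass with the parameters frozen. The weights, bias, and input features below are hypothetical values standing in for an already trained model, and the timing uses Python’s standard `time.perf_counter`.

```python
import time

# Hypothetical parameters, assumed to come from an earlier training run.
WEIGHTS = [0.8, -0.4, 0.3]
BIAS = 0.1


def predict(features):
    """Forward pass only: weighted sum plus bias, thresholded at zero."""
    score = sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS
    return 1 if score > 0 else 0


start = time.perf_counter()
label = predict([1.0, 0.5, 2.0])
latency_ms = (time.perf_counter() - start) * 1000

print(f"label={label}, latency={latency_ms:.4f} ms")
```

Nothing is learned here; the model only answers. What matters in production is how small and how predictable that latency stays when requests arrive continuously.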

3.3. Key Nvidia Technologies for AI

Nvidia hasn’t just made GPUs for AI; it has built an entire ecosystem around them. The CUDA parallel computing platform is a prime example. CUDA allows developers to write code that takes advantage of a GPU’s massive parallel processing power. Nvidia has also developed Tensor Cores, specialized processing units on its GPUs that are specifically designed to accelerate the matrix operations at the heart of deep learning. This specialized hardware is a key reason why Nvidia has maintained its leadership in AI hardware. For a great example of AI in action, you can check out a post on the PPLE Labs blog about Deepgram, a company advancing voice AI and real-time speech recognition.
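The matrix operation that Tensor Cores accelerate is, at its core, a multiply-accumulate on small matrix tiles: D = A × B + C. The sketch below shows only that math in plain Python on a 2×2 example. The function name `matmul_accumulate` is hypothetical, and this is not CUDA code or Nvidia’s API.

```python
def matmul_accumulate(a, b, c):
    """Return D = A x B + C for small square matrices given as nested lists."""
    n = len(a)
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) + c[i][j] for j in range(n)]
        for i in range(n)
    ]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = [[1, 1], [1, 1]]

print(matmul_accumulate(a, b, c))  # prints [[20, 23], [44, 51]]
```

Each output entry is an independent sum of products, so specialized hardware can compute a whole tile at once rather than looping entry by entry as this sketch does.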

4. Nvidia’s Dominance in the AI Data Center

While many of us think of GPUs in our personal computers, Nvidia’s real power lies in the data center. Data centers are the giant, interconnected server farms that power the internet and all the cloud services we use.

4.1. The Backbone of Modern Computing

In today’s world, data centers are where the most powerful AI models are trained and deployed. Companies like Google, Amazon, and Microsoft rely on vast clusters of GPUs to handle the demanding workloads of machine learning. These data centers are the heart of the AI revolution, and Nvidia’s GPUs are their lifeblood. They provide the immense processing power required for everything from natural language processing to drug discovery and climate modeling.

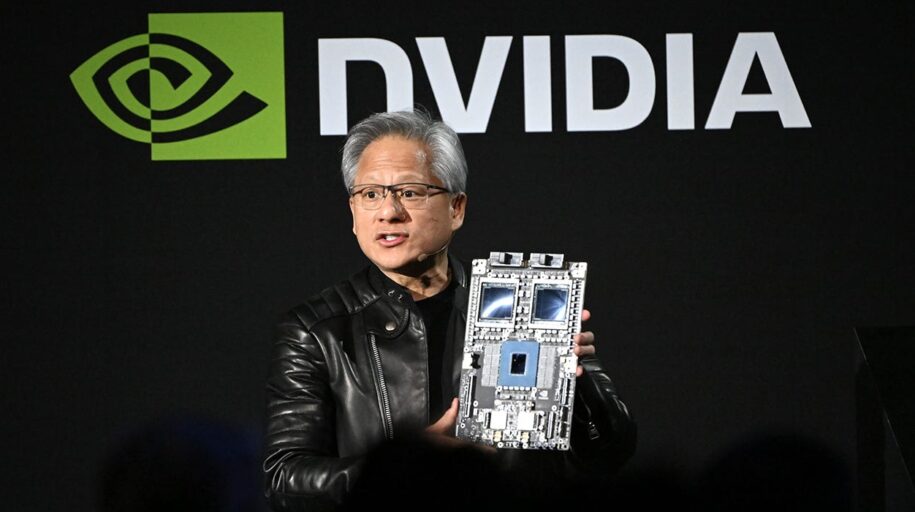

4.2. Specific Nvidia GPUs for AI and HPC

Nvidia has a specific lineup of GPUs designed for these high-performance computing (HPC) environments. Their A100 and H100 Tensor Core GPUs, for example, are the gold standard in the industry. These Nvidia GPUs aren’t just powerful; they are built for scalability and efficiency, allowing for massive AI models to be trained across thousands of interconnected Nvidia GPUs. They are the tools that are pushing the boundaries of what’s possible with AI. This is a far cry from what was needed for healthcare cybersecurity in the pre-AI era, as another PPLE Labs article on proactive defenses explains.

4.3. The Importance of Data Centers for AI Hardware

AI hardware, particularly Nvidia GPUs, thrives in the data center environment. This is because training large-scale AI models requires not just one or two powerful GPUs, but hundreds or even thousands working together in a cluster. The infrastructure of a data center provides the networking, power, and cooling needed to make this possible. The ability to deploy and manage these GPU clusters is a critical factor in a company’s ability to innovate in the AI space.
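One common way many GPUs “work together” is data parallelism: each device computes a gradient on its own slice of the data, the gradients are averaged across devices, and every device applies the same update. The sketch below simulates that with two hypothetical workers in plain Python. The model (`y = w * x`), the shards, and the function names are assumptions for illustration, and real clusters do the averaging step over high-speed networking.

```python
def local_gradient(w, xs, ys):
    """Gradient of mean squared error for y = w * x on one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def all_reduce_mean(values):
    """Stand-in for the cluster-wide averaging step."""
    return sum(values) / len(values)


def data_parallel_step(w, shards, learning_rate=0.01):
    """One training step: local gradients, a shared average, one common update."""
    grads = [local_gradient(w, xs, ys) for xs, ys in shards]
    return w - learning_rate * all_reduce_mean(grads)


# Two simulated workers, each holding half of a toy dataset drawn from y = 3x.
shards = [([1.0, 2.0], [3.0, 6.0]), ([3.0, 4.0], [9.0, 12.0])]

w = 0.0
for _ in range(200):
    w = data_parallel_step(w, shards)

print(f"learned w = {w:.6f}")
```

With equally sized shards, averaging the per-worker gradients gives the same update as computing one gradient over all the data, so adding workers splits the computation without changing the result. The averaging step is also why networking matters so much inside a data center.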

5. The Future of AI Hardware and Nvidia’s Position

The AI landscape is always changing, and Nvidia is constantly pushing the envelope. They know that to stay on top, they need to keep innovating.

5.1. The Ever-Evolving Landscape

What’s next for AI hardware? We’re seeing a focus on even greater energy efficiency, higher memory bandwidth, and more specialized processors that can handle specific types of AI tasks. The goal is to make AI faster and more accessible. New architectures and chip designs are constantly being developed to meet the exploding demand for computational power. You can read more about how AI is impacting various fields in our post on Perplexity AI.

5.2. New Innovations on the Horizon

Nvidia is already looking ahead. They’ve introduced their Grace Hopper “superchip,” which pairs a CPU and a GPU in a single module, joined by a high-speed interconnect, to improve performance and efficiency for HPC and AI workloads. They are also investing heavily in software, making their platform even more powerful for developers. This holistic approach, combining cutting-edge hardware with a robust software ecosystem, is a major reason for their success.

5.3. A Look at the Competition

Of course, Nvidia isn’t the only player in the game. Competitors like AMD and Intel are also making significant strides in the AI hardware market. AMD’s Instinct series is a formidable challenger, and Intel is leveraging its long history in chip manufacturing to develop its own AI accelerators. Additionally, large tech companies like Google and Amazon are creating their own custom chips, such as Google’s TPUs, to power their in-house AI services. While the competition is heating up, Nvidia’s first-mover advantage and deep integration into the developer community give it a strong lead. For more on the competition, you can read this article from SingSaver. We also have some great content on the broader topic of AI in healthcare, such as our article on Top Cybersecurity Risks Facing AI-Driven Healthcare Systems.

Conclusion

Nvidia’s journey from a gaming hardware company to the undisputed leader of the AI revolution is a fascinating story of foresight and innovation. By recognizing the parallel processing power of their graphics processing units, they unlocked a new world of possibilities for artificial intelligence. From training complex models in massive data centers to powering real-time inference in our everyday devices, Nvidia’s GPUs are the engine behind the most transformative technology of our time. They are not just selling chips; they are providing the foundational building blocks for a future where intelligent machines can solve some of humanity’s most challenging problems. If you’re interested in how this type of technology is impacting the digital health space, you might also find our article on The IoMT Imperative to be a great resource.

FAQs

1. Why are GPUs so much better than CPUs for AI? GPUs are designed with a parallel architecture, meaning they have thousands of small, efficient cores that can perform many calculations at the same time. This is perfect for the repetitive, data-intensive tasks required to train AI models. CPUs, in contrast, have fewer, more powerful cores that are better suited for sequential, complex tasks.

2. What is the difference between training and inference in AI? Training is the process of teaching an AI model by feeding it massive amounts of data so it can learn patterns and improve its accuracy. Inference is the process of using that already trained model to make predictions or decisions on new data. GPUs are crucial for both.

3. What is CUDA? CUDA is a parallel computing platform and programming model developed by Nvidia. It allows developers to use a GPU’s parallel processing power for general-purpose computing, which is essential for AI development. It’s the software that unlocks the hardware’s potential.

4. Does Nvidia have any competitors in the AI hardware space? Yes, several companies are competing with Nvidia. Advanced Micro Devices (AMD) and Intel are major players with their own AI accelerator chips, and large technology companies like Google and Amazon are developing custom chips for their own internal use.

5. How important are data centers to Nvidia’s business? Data centers are extremely important. They are the primary customers for Nvidia’s high-end, powerful GPUs designed for AI and high-performance computing. The growth of cloud services and large-scale AI models means that demand for data center hardware is constantly increasing.
