You’ve probably heard the buzz about Artificial Intelligence transforming healthcare, promising everything from faster cancer detection to highly personalized treatment plans. It’s an exciting future, yet a massive roadblock stands in the way: patient data. Training powerful AI models requires huge, diverse datasets, but that sensitive medical information, known as Protected Health Information (PHI), is locked away behind firewalls and strict privacy laws like HIPAA and GDPR. This is where an approach called Federated Learning steps in. It’s the key that unlocks the combined knowledge of global medical data without requiring patient records to be shared. We’re talking about a game changer that allows AI to learn from protected data while keeping that data exactly where it belongs: safe and sound in its original location.

1. The Data Dilemma: Why Centralized Learning Fails with Patient Records

Before we dive into the brilliance of Federated Learning, let’s acknowledge the elephant in the room. Why can’t we just pool all the patient data into one massive cloud server and train a model the old-fashioned way?

1.1. The Strict Regulations Governing Patient Data

The number one reason is legal and ethical compliance. In the United States, the Health Insurance Portability and Accountability Act (HIPAA) imposes severe penalties for mishandling patient records. Across the pond, the European Union’s General Data Protection Regulation (GDPR) is just as tough. These laws were created for a good reason: to protect our most personal information. This regulatory environment makes the idea of “data sharing” almost impossible for hospitals and clinics. Therefore, any AI innovation in healthcare simply must be privacy-preserving. To manage this sensitive landscape effectively, a thorough understanding of these requirements is non-negotiable, as we discuss in our post on the HIPAA Compliance checklist and implementation Guide.

1.2. The Problem of Data Silos and Biased Models

Even if the legal hurdles could somehow be magically overcome, there’s a practical problem: healthcare data lives in “data silos.” Hospitals, research institutes, and clinics operate independently, and the patient population at a major urban medical center will be vastly different from that of a rural community hospital. If a model is only trained on data from one silo, it becomes biased and performs poorly when applied elsewhere. You might build an excellent cancer detection model for Hospital A, but it could fail miserably at Hospital B because the demographics or imaging equipment are different. This simply isn’t an acceptable standard for life-saving technology. Moreover, preparing this disparate data for central use is a massive undertaking, which we’ve covered in detail in Healthcare Data for LLMs: Prepare Information for Compliance.

2. Understanding Federated Learning: A Privacy-First Approach

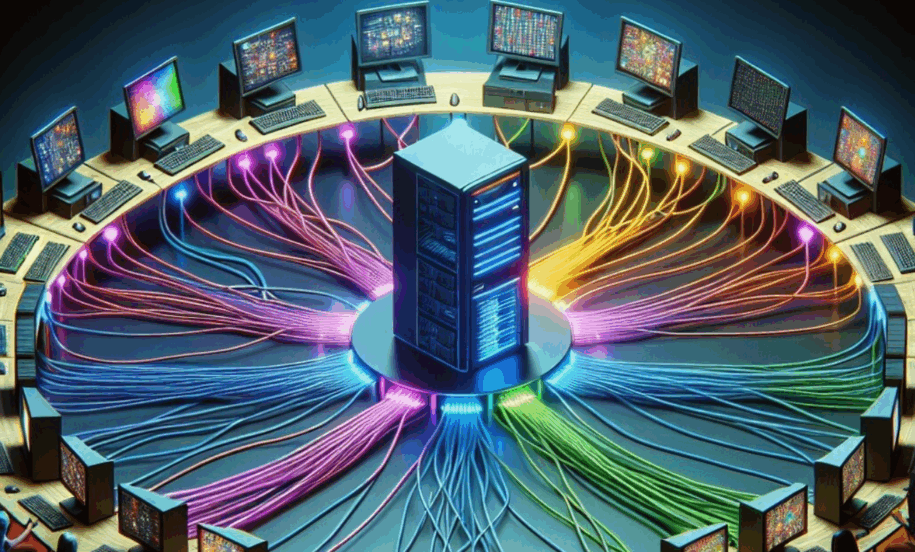

Federated Learning (FL) is a distributed machine learning approach introduced by Google in 2016. Think of it as teamwork for AI models. It’s fundamentally about enabling a collaborative effort across multiple data owners, such as hospitals, where the machine learning model is shared but the confidential patient data never moves. In other words, it swaps the usual “data to model” pattern for “model to data.”

2.1. The Core Mechanism of Federated Learning

Instead of bringing all the sensitive patient data to a central cloud server, FL does the opposite: it brings the AI model to the data. Each participating hospital, or “client,” downloads a shared, preliminary version of the AI model. They then train this model locally using their own, protected patient data. The raw data never leaves the hospital’s secure server. Once the local training is complete, the hospital doesn’t send its raw data back; instead, it sends back a small, encrypted summary of the changes, or “model updates,” that resulted from the training. A central server then aggregates all these updates from all participating hospitals to create a more robust, global Federated Learning model.
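To make the loop above concrete, here is a minimal sketch of a few training rounds in plain Python. Everything in it is an assumption for illustration: the hospital names, the tiny linear model, the synthetic numbers standing in for patient data, and the learning-rate settings. Encryption and networking are left out entirely, and a real deployment would use a dedicated federated learning framework rather than hand-rolled code like this.

```python
import random


def predict(weights, x):
    """Tiny linear model: y = w * x + b."""
    w, b = weights
    return w * x + b


def local_update(global_weights, records, lr=0.01, epochs=20):
    """Runs inside one hospital: train on private records, return only the weight change."""
    w, b = global_weights
    for _ in range(epochs):
        for x, y in records:
            error = predict((w, b), x) - y
            w -= lr * error * x
            b -= lr * error
    # The raw records stay local; only this delta is sent to the server.
    return [w - global_weights[0], b - global_weights[1]]


def federated_round(global_weights, hospitals):
    """Runs on the server: collect every client's delta and apply the plain mean."""
    deltas = [local_update(global_weights, records) for records in hospitals.values()]
    mean_delta = [sum(d[i] for d in deltas) / len(deltas) for i in range(len(global_weights))]
    return [g + m for g, m in zip(global_weights, mean_delta)]


def make_records(n):
    """Synthetic stand-in for private data, drawn from y = 2x + 1 plus noise."""
    xs = [random.uniform(0, 3) for _ in range(n)]
    return [(x, 2 * x + 1 + random.gauss(0, 0.1)) for x in xs]


random.seed(0)
hospitals = {name: make_records(30) for name in ("hospital_a", "hospital_b", "hospital_c")}

weights = [0.0, 0.0]  # initial global model
for _ in range(30):
    weights = federated_round(weights, hospitals)

print("global model after 30 rounds:", weights)
```

Notice that `federated_round` only ever touches the deltas returned by `local_update`. The lists of records are never passed to the server-side averaging step, which is the whole point of the design.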

2.2. A Simple Analogy for Federated Learning

Imagine you and a group of friends are trying to perfect a recipe, but none of you can share your secret spice cabinet. That’s what’s happening here. Instead of sharing your whole spice cabinet (the patient data), you all agree to use a common, starting recipe (the global model). Each of you cooks the recipe in your own kitchen (local training), adjusts the seasonings based on your own secret ingredients (the patient data), and then, instead of sharing the spices themselves, you only share a note saying, “I added 1/4 teaspoon more salt and a dash of pepper.” The central server takes everyone’s notes and creates a new, improved common recipe for the next round. Everyone benefits from the collective wisdom, yet everyone’s secret spice cabinet (the protected patient data) remains completely private. This is the simple elegance of Federated Learning. The result is a much better final product because it has been tested and refined by a far more diverse set of “spices.”

3. The Step-by-Step Process of Federated Learning in Healthcare

So, how does this collaborative training process actually work in a highly regulated hospital environment? It’s a cyclical process that leverages encryption and aggregation to ensure security and model performance.

3.1. Initialization and Distribution: The Global Model’s Journey

The journey of Federated Learning starts with a central server, often managed by a trusted third party or a consortium, that creates an initial version of the AI model, known as the global model. This model, which could be anything from a neural network for image recognition to a predictor for hospital readmissions, is then securely distributed to all participating healthcare institutions. Security is a key consideration from the start, and new technologies, including those we discuss in our post on Top 5 AI Cybersecurity Tools Safeguarding Healthcare, are continuously being integrated.

3.2. Local Training on Protected Patient Data

Upon receiving the global model, each hospital begins local training. The model learns from the hospital’s unique and proprietary dataset. This is the critical privacy step: the raw patient records, medical images, or genomic sequences are never transmitted, viewed, or stored outside the hospital’s secure, regulated environment. The model training happens strictly behind the local firewall, which helps keep the process in line with regulations like HIPAA. This process is compatible with the latest advancements in AI and Machine Learning, which we explored in AI & Machine Learning: The Personalized Healthcare Revolution.

3.3. Aggregation and Optimization: Creating a Better Global Federated Learning Model

Once local training is complete, the client hospital sends a small, encrypted update (the difference between the starting and ending model parameters) back to the central server. The server’s job is to take the updates from every participant and combine them into a single, improved global model using an aggregation algorithm, commonly FedAvg (Federated Averaging), which averages the updates and typically weights each one by the number of training examples behind it. Averaging also adds a layer of privacy, since any single patient’s contribution is blended into the combined updates of hundreds or thousands of others, although it is not a complete defense on its own (see Q4 in the FAQs). This is how the collective intelligence is harnessed while protecting individual patient privacy, leading to a truly collaborative intelligence, a concept further detailed by this authority post on federated learning architecture.
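As a rough illustration of the averaging step, the sketch below combines updates from two hypothetical hospitals, weighting each by its number of training examples as FedAvg does. The function names and the numbers are made up for the example. It averages parameter deltas rather than full parameter sets, which gives the same result when every client starts the round from the same global model.

```python
def fed_avg(client_updates):
    """Combine (num_examples, delta) pairs into one example-weighted average delta."""
    total_examples = sum(n for n, _ in client_updates)
    size = len(client_updates[0][1])
    return [
        sum(n * delta[i] for n, delta in client_updates) / total_examples
        for i in range(size)
    ]


def apply_update(global_weights, avg_delta):
    """Add the averaged delta to the current global model."""
    return [w + d for w, d in zip(global_weights, avg_delta)]


# Hypothetical updates: (number of local training examples, parameter delta)
updates = [
    (1000, [0.10, -0.20]),  # smaller hospital
    (3000, [0.30, 0.00]),   # larger hospital, so its delta counts three times as much
]

avg_delta = fed_avg(updates)
new_global = apply_update([1.0, 1.0], avg_delta)
print(avg_delta, new_global)
```

Weighting by example count keeps a small clinic’s update from pulling the global model as hard as a large medical center’s. Whether that is the right trade-off for a given consortium is a design decision, not a given.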

4. The Real-World Impact of Federated Learning

The potential for Federated Learning in medicine is staggering because it directly addresses the biggest challenge in AI research: access to high-quality, diverse data.

4.1. Accelerating Rare Disease Research and Drug Discovery

Rare diseases are a perfect example of the data silo problem. By their very definition, no single hospital treats enough patients to build an effective AI model. However, when a dozen institutions around the world can collaboratively train a Federated Learning model without sharing a single patient’s file, they can finally gather the statistical power needed to identify genetic markers, predict disease progression, and discover new drug targets. This collaborative mechanism is a lifeline for personalized medicine, pushing forward innovations like AI Digital Twin: Personalized medicine and treatment simulation. This acceleration of research is a significant advantage, as further explored by this authority post on collaborative research.

4.2. Improving Medical Imaging Diagnostics with Federated Learning

Medical imaging, such as MRIs, CT scans, and X-rays, is one of the most mature applications of Federated Learning. Hospitals often use different scanners and imaging protocols, which can make a model trained on one hospital’s images perform poorly at another. FL allows hospitals to collaboratively train models for tasks like tumor segmentation or cancer detection. Since the model learns from diverse equipment and patient demographics globally, the final model tends to be more accurate and generalizable. It’s like creating an AI specialist whose knowledge isn’t limited to one clinic but draws on experience from around the world. For example, a model trained with FL can more accurately segment brain tumors from MRIs, leading to faster and more reliable diagnoses, as evidenced by a comprehensive systematic review on federated learning in health. The improved efficiency in diagnostics aligns with the benefits seen in other AI applications, like using Abridge: AI Medical Scribe for Enhanced Healthcare Efficiency.

5. Conclusion: A New Era of Collaborative and Secure AI

The promise of AI in healthcare is no longer a distant dream, but a secure reality thanks to Federated Learning. By flipping the script and bringing the model to the data, this distributed approach is breaking down the long-standing barriers of privacy, regulation, and data silos. It is a win-win technology: patients benefit from more accurate, less biased, and globally informed AI diagnostics and treatments, all while their most sensitive data remains securely under lock and key. As more institutions adopt this framework, we are heading towards a truly collaborative global medical community where the collective intelligence of the world’s healthcare data can finally be put to work, safely and ethically. This shift to decentralized training is already proving vital, as highlighted in this authority post on data governance.

Frequently Asked Questions (FAQs) about Federated Learning

- Q1. How does Federated Learning maintain patient privacy if the model is shared? The privacy is maintained because the raw patient data never leaves the local institution’s server. Only the model updates (the mathematical changes to the model’s parameters) are sent to the central server. These updates are typically encrypted and combined with updates from many other sites, making it incredibly difficult to reverse-engineer and identify any individual patient’s contribution.

- Q2. Is Federated Learning the same as simply anonymizing the data? No, they are different. Data anonymization (or de-identification) attempts to remove identifying information from patient records, but it’s an imperfect process, and researchers have shown that de-identified data can sometimes be re-identified. Federated Learning goes a step further by never moving the data in the first place, regardless of whether it’s anonymized, which offers stronger privacy protection.

- Q3. What are the biggest challenges currently facing Federated Learning adoption in healthcare? The main challenges are technical and organizational. Technically, there are issues with statistical heterogeneity (where the data distribution across different hospitals is vastly different) and system heterogeneity (differences in hardware and network speed). Organizationally, establishing a common protocol, ensuring data quality across sites, and creating the necessary governance agreements between competing hospitals can be complex.

- Q4. Can a malicious actor reverse-engineer the patient data from the model updates? While the aggregation process offers meaningful privacy protection, sophisticated attacks, known as “model inversion attacks,” can theoretically extract some information from the model updates. To counteract this, FL systems often incorporate additional privacy techniques, such as Differential Privacy, which adds random “noise” to the updates, or Secure Multi-Party Computation, which keeps them encrypted even during aggregation.

- Q5. What kind of AI models can be trained using Federated Learning? Federated Learning is highly versatile and can be used to train many types of machine learning models. The most common applications in healthcare involve deep learning models (like convolutional neural networks for medical imaging) and models based on Electronic Health Record (EHR) data for predictive tasks like disease prognosis or length of hospital stay.
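
For readers curious about the two defenses mentioned in Q4, here is a toy sketch of the ideas behind them. It is not a secure implementation, and every name and number in it is an assumption. The noise level is not calibrated to any formal privacy budget. The masks are generated in one place purely for illustration, whereas real secure aggregation protocols derive them from secrets agreed between pairs of clients so that no single party ever sees them all.

```python
import math
import random


def clip_update(delta, max_norm):
    """Scale an update down so its L2 norm is at most max_norm (limits any one site's influence)."""
    norm = math.sqrt(sum(v * v for v in delta))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [v * scale for v in delta]


def add_gaussian_noise(delta, sigma, rng):
    """Differential-privacy-style step: blur the update with random noise."""
    return [v + rng.gauss(0.0, sigma) for v in delta]


def pairwise_masks(num_clients, size, rng):
    """Masks that cancel in the sum: whatever client i adds, client j subtracts."""
    masks = [[0.0] * size for _ in range(num_clients)]
    for i in range(num_clients):
        for j in range(i + 1, num_clients):
            shared = [rng.uniform(-100, 100) for _ in range(size)]
            for k in range(size):
                masks[i][k] += shared[k]
                masks[j][k] -= shared[k]
    return masks


rng = random.Random(42)
raw_updates = [[0.3, -0.4], [3.0, 4.0], [0.0, 0.1]]  # hypothetical client deltas

# Step 1: clip each update, then (optionally) add noise to it.
clipped = [clip_update(d, max_norm=1.0) for d in raw_updates]
noisy_example = add_gaussian_noise(clipped[0], sigma=0.05, rng=rng)

# Step 2: each client hides its update behind its mask before sending.
masks = pairwise_masks(len(clipped), size=2, rng=rng)
masked = [[v + m for v, m in zip(d, mask)] for d, mask in zip(clipped, masks)]

# The server sums the masked updates; the masks cancel, leaving the true sum.
masked_sum = [sum(col) for col in zip(*masked)]
true_sum = [sum(col) for col in zip(*clipped)]
print(masked_sum, true_sum)
```

Each masked update on its own looks like random numbers, yet the server still recovers the correct total (up to floating-point rounding). That is the intuition behind keeping updates hidden “even during aggregation.”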
